Aditya Grover

CTO

The next wave of personal computing isn't about better apps; it's about agents that live on your behalf: managing your calendar, triaging your inbox, tracking your finances, keeping your notes in order.

For agents to work in production, three things have to be true at once: the model has to be accurate, it has to be fast, and it has to be cheap enough to run continuously. Most models optimize for one or two of these. Mercury 2 optimizes for all three.

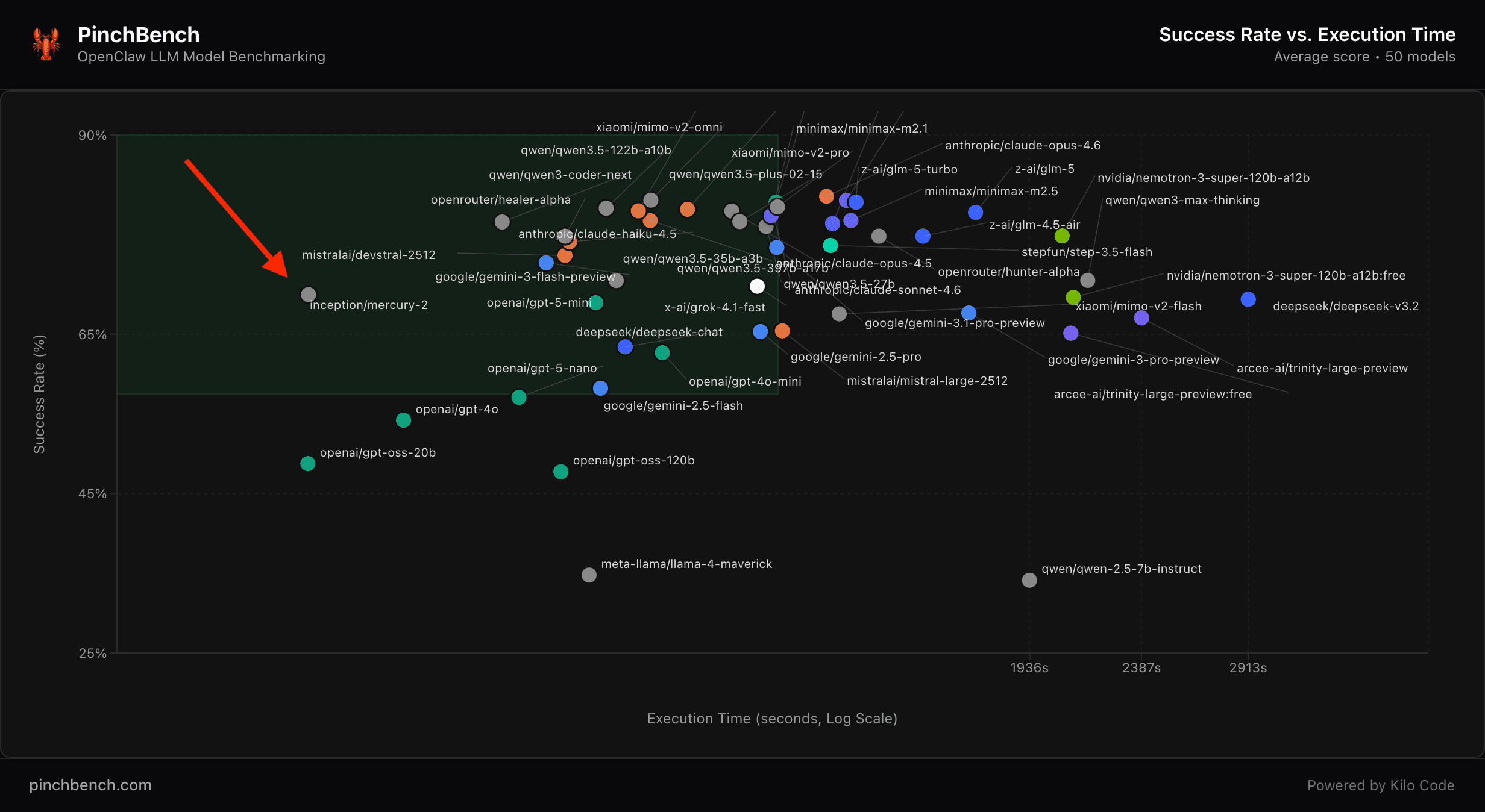

We evaluated Mercury 2 on PinchBench, the open-source benchmark built on top of OpenClaw — the fastest-growing open-source project in GitHub history, with 250K+ stars in under 60 days. Here's what we found.

The result

Mercury 2 sits in the upper-left of the chart: high task success rate and the fastest execution time in its performance class.

78% success rate — matching or exceeding GPT-5 Mini (75%), Gemini 2.5 Flash (71%), DeepSeek Chat (72%), and GPT-4o (71%).

Fastest execution time — completing agentic tasks faster than every model at comparable accuracy, and faster than most models at any accuracy level.

<$1 / million tokens — $0.25 per 1M input, $0.75 per 1M output. Roughly 4x cheaper than Claude 4.5 Haiku on input, and 4x cheaper on output.

Mercury 2 defines the Pareto frontier: competitive task performance, industry-leading speed, at a price point that makes continuous agent operation viable.

What is PinchBench?

PinchBench measures how well LLMs perform as the brain of an OpenClaw agent. Instead of testing isolated capabilities, it evaluates real agent workflows: scheduling meetings, triaging email, researching topics, managing files, and writing code.

It tests what actually matters for production agents:

Tool usage — Can the model call the right tools with the right parameters?

Multi-step reasoning — Can it chain actions to complete complex tasks?

Real-world messiness — Can it handle ambiguous instructions and incomplete information?

Practical outcomes — Did it actually create the file, send the email, or schedule the meeting?

Crucially, PinchBench assesses the joint tradeoff between quality, cost, and latency — making it explicit whether a model is viable for continuous, real-world agent deployment.

Why this matters for agents

Agents run dozens of inference calls per task. Latency compounds across every step. Cost compounds across every hour. A model that's accurate but slow is a demo; a model that's fast but expensive doesn't scale to continuous operation.

Mercury 2's speed comes from a fundamentally different technical approach — parallel refinement instead of token-by-token generation. The speed advantage is native to the model, not dependent on specialized hardware or compression. Reasoning-grade quality, real-time latency, standard GPUs.

Try Mercury 2

If you’re doing an enterprise evaluation, we’ll partner with you on workload fit, eval design, and performance validation under your expected serving constraints.